Farhan Humayun, University of Turku. farhan.humayun@utu.fi

The future of Earth observation

We are entering a transformative era in Earth Observation (EO), where data is no longer just collected but intelligently fused, enhanced, and interpreted across diverse sensors and AI systems. Thanks to the rapid advancements in satellite and UAV-based remote sensing technologies, vast quantities of EO data are now available, enabling the monitoring of Earth’s surface dynamics in unprecedented detail.

In parallel, Generative AI models, particularly Transformers and diffusion models, are revolutionizing how we integrate and interpret data across different modalities. These models can synthesize information, fill gaps left by missing data, and enhance low-quality features, unlocking new opportunities in EO.

However, these advancements raise essential questions:

- How can we intelligently fuse heterogeneous data from sensors like multispectral, hyperspectral, SAR, and LiDAR?

- How do we ensure precise alignment and feature matching across different resolutions and perspectives?

- What new insights can fusion techniques unlock for applications in hydrology, forestry, and beyond?

- How can Generative AI improve remote sensing workflows—and what are its limitations?

At the Department of Computing, University of Turku, within the Digital Waters (DIWA) Flagship, we are addressing these questions at the intersection of Remote Sensing and Computer Vision, leveraging deep learning to develop novel tools for EO applications in forestry, hydrology, and potentially robotics. Some of our recent efforts are detailed below.

Deep learning meets Interferometric SAR (InSAR)

Synthetic Aperture Radar (SAR) sensors are among the most powerful EO tools available today. Their active microwave signals allow imaging through clouds, in all weather conditions, and even at night, capabilities that optical sensors lack. SAR data consists of amplitude and phase information, and Interferometric SAR (InSAR) techniques exploit the phase component to detect minute surface changes over time.
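To make the role of phase concrete, here is a minimal sketch of how an interferogram and a coherence estimate can be formed from two co-registered complex SAR acquisitions. It is an illustration on synthetic data, not our processing chain: the function names, the eight-pixel window, and the 0.5 rad phase offset are all assumptions chosen for the example.

```python
import cmath
import math

def interferogram(primary, secondary):
    """Pixel-wise product of the primary with the complex conjugate of the secondary."""
    return [p * s.conjugate() for p, s in zip(primary, secondary)]

def coherence(primary, secondary):
    """Magnitude of the normalised complex cross-correlation over a window (0 to 1)."""
    cross = sum(p * s.conjugate() for p, s in zip(primary, secondary))
    power = math.sqrt(
        sum(abs(p) ** 2 for p in primary) * sum(abs(s) ** 2 for s in secondary)
    )
    return abs(cross) / power if power else 0.0

# Synthetic window (assumption): the secondary acquisition differs from the
# primary only by a constant phase shift of 0.5 rad.
primary = [cmath.rect(1.0, 0.3 * k) for k in range(8)]
secondary = [p * cmath.exp(-0.5j) for p in primary]

# Interferometric phase per pixel, wrapped to (-pi, pi]
phase = [cmath.phase(z) for z in interferogram(primary, secondary)]
gamma = coherence(primary, secondary)
```

In this noise-free case every pixel carries the same interferometric phase and the coherence is 1. With real image pairs, decorrelation from vegetation, moisture, or time lowers the coherence, which is why pair selection and filtering matter.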

We are developing machine learning-enhanced InSAR pipelines to:

- Improve phase unwrapping for accurate Digital Elevation Models (DEMs)

- Generate high-coherence interferograms using optimized pre-processing and filtering

- Compensate for variability due to soil moisture, vegetation, and precipitation
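As background for the first item, the sketch below shows what phase unwrapping means in the simplest one-dimensional case: interferometric phase is only observed modulo 2π, and unwrapping restores the continuous signal by integrating wrapped differences (often called Itoh's method). This is a textbook illustration, not the machine learning-enhanced approach we are developing, and it only works when neighbouring samples differ by less than π. Real interferograms need two-dimensional algorithms that cope with noise. All names and values are assumptions for the example.

```python
import math

def wrap(phi):
    """Wrap a phase value into the interval [-pi, pi)."""
    return (phi + math.pi) % (2 * math.pi) - math.pi

def unwrap_1d(wrapped):
    """Integrate wrapped phase differences along a profile (Itoh's method)."""
    unwrapped = [wrapped[0]]
    for prev, cur in zip(wrapped, wrapped[1:]):
        # Each wrapped difference equals the true difference if |true diff| < pi
        unwrapped.append(unwrapped[-1] + wrap(cur - prev))
    return unwrapped

# Synthetic phase ramp (assumption): 0.8 rad per sample, well below pi
true_phase = [0.8 * k for k in range(20)]
wrapped = [wrap(p) for p in true_phase]   # what the sensor actually observes
recovered = unwrap_1d(wrapped)            # continuous phase, up to the first sample
```

The recovered profile matches the original ramp because the first sample happens to be unambiguous. In general, unwrapped phase is only known up to a constant offset, which is resolved with reference points when converting phase to elevation.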

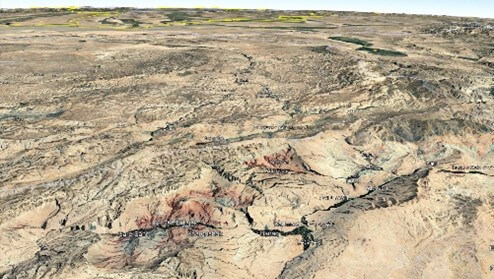

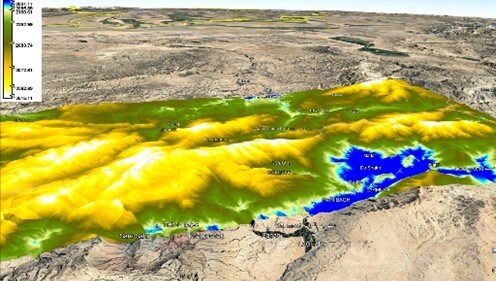

These enhanced DEMs will support applications such as precise vegetation height and volume estimation, topographic watershed mapping, and generation of 3D environments for UAV/robotic SLAM applications. Initial results of the DEM generation are shown in Figure 1.

Figure 1. DEM generation using high-coherence Sentinel-1 image pairs (background map courtesy of Google Earth).

High-resolution aerial remote sensing with UAVs

UAV-based remote sensing offers a cost-effective and agile alternative to satellite-based imaging. UAVs can carry a range of sensors, from standard RGB cameras to stereo systems, hyperspectral imagers, and even LiDAR. Low-altitude UAV flights (100–200 m) can capture ultra-high-resolution data, ideal for applications where detail and localization are critical.
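The link between flight altitude and image detail can be sketched with the standard ground sampling distance (GSD) relation for a nadir-pointing camera: GSD = altitude × pixel pitch / focal length. The camera parameters below are hypothetical placeholders, not the specifications of the system we flew.

```python
def ground_sampling_distance(altitude_m, pixel_pitch_m, focal_length_m):
    """Ground footprint of one pixel for a nadir-pointing camera, in metres."""
    return altitude_m * pixel_pitch_m / focal_length_m

# Hypothetical camera (assumption): 2.4 micrometre pixel pitch, 8.8 mm focal length
PIXEL_PITCH_M = 2.4e-6
FOCAL_LENGTH_M = 8.8e-3

gsd_100 = ground_sampling_distance(100.0, PIXEL_PITCH_M, FOCAL_LENGTH_M)
gsd_200 = ground_sampling_distance(200.0, PIXEL_PITCH_M, FOCAL_LENGTH_M)
print(f"{gsd_100 * 100:.1f} cm/pixel at 100 m, {gsd_200 * 100:.1f} cm/pixel at 200 m")
```

For these assumed values the result is roughly 2.7 cm per pixel at 100 m and 5.5 cm at 200 m, which shows how halving the altitude halves the pixel footprint.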

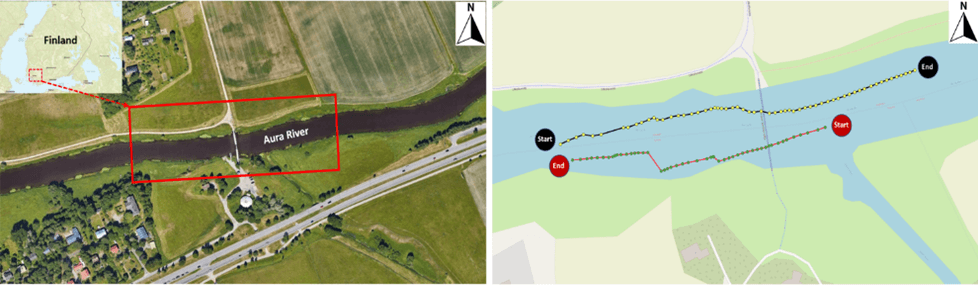

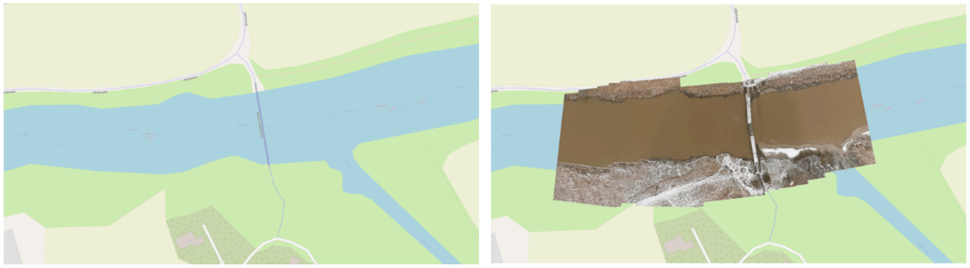

As part of our DIWA doctoral pilot, we conducted a UAV survey of the Aura River in Finland. The goal was to produce detailed orthomosaics of the river channel from nadir-viewing imagery, enabling precise spatial analysis. As an initial experiment, the UAV was equipped with an RGB camera. The study area covered a diverse set of surface features: paved and vegetated ground, a clearly defined river channel, and a pedestrian bridge with variable lighting and surface reflectance. After capturing over 100 overlapping frames, we applied robust feature detection and matching algorithms to generate an accurate orthomosaic, which was then georeferenced for GIS compatibility.
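The matching step can be illustrated with a minimal sketch of nearest-neighbour descriptor matching with a ratio test, a common way to discard ambiguous correspondences between overlapping frames. The survey description above does not name a specific detector or matcher, so this stands in for the general idea only. The function name, the 0.75 threshold, and the toy three-dimensional descriptors are assumptions for the example.

```python
import math

def match_descriptors(desc_a, desc_b, ratio=0.75):
    """Return (i, j) index pairs whose nearest neighbour passes the ratio test.

    desc_b must contain at least two descriptors.
    """
    matches = []
    for i, da in enumerate(desc_a):
        # Distances from descriptor i in frame A to every descriptor in frame B
        dists = sorted((math.dist(da, db), j) for j, db in enumerate(desc_b))
        (best, j), (second, _) = dists[0], dists[1]
        # Keep the match only if it is clearly better than the runner-up
        if best < ratio * second:
            matches.append((i, j))
    return matches

# Toy descriptors (assumption): real ones have tens to hundreds of dimensions
frame_a = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
frame_b = [(1.0, 0.1, 0.0), (0.0, 0.1, 1.0), (0.9, 0.9, 0.9), (0.1, 0.9, 0.1)]

matches = match_descriptors(frame_a, frame_b)
```

Here the first two descriptors in frame A each have one clear counterpart in frame B, while the third is equally close to two candidates and is rejected. In a full pipeline, the surviving matches would feed a robust geometric estimation step before the frames are blended into a mosaic.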

The availability of multi-sensor remote sensing data brings both opportunities and challenges for accurate, timely, and scalable Earth observation. Unlocking the full potential of this data requires not just integration, but intelligent multi-sensor fusion that respects the physics, geometry, and statistical structure of each modality.

Our ongoing research addresses this need by exploring how deep learning and generative AI can serve as enablers for robust fusion pipelines. Whether it’s through improving InSAR-based DEM generation, refining orthomosaics from UAV imagery, or enhancing under-sampled regions via generative models, our goal is to develop multi-sensor EO systems that are accurate, resilient, and scalable.

The convergence of Generative AI, sensor-aware learning, and remote sensing physics has the potential to redefine how we extract insight from Earth data. We are particularly interested in how ViT-based architectures, diffusion models, and cross-modal embeddings can be used to co-register, interpolate, and interpret data across various temporal and spatial scales. The overall objective is to develop robust models that generalize across sensors and environmental conditions while maintaining interpretability and trustworthiness.

Interested in collaborating?

“If you’re working at the intersection of remote sensing, AI, or sensor fusion, I’d love to connect. Let’s discuss how we can build smarter, AI-augmented Earth observation systems together.” – Farhan Humayun

3.7.2025.