Haizhou Zhang, University of Turku. hazhan@utu.fi

Have you ever wondered how robots can help protect our environment?

Imagine drones flying above rivers and autonomous boats gliding across the water, all carrying advanced sensors and working together. This isn’t science fiction: it is technology that is already changing environmental monitoring. My research at the University of Turku focuses on making this vision practical by combining multiple robots equipped with diverse sensors to better understand and protect our natural surroundings.

Why Multi-Sensor Integration Matters

Traditional environmental monitoring methods often rely on single-sensor systems, such as cameras or simple water sampling devices. While these methods have served well, they have clear limitations. For example, cameras alone might struggle to detect underwater features, and water sensors cannot monitor large areas quickly.

This is where multi-sensor systems excel. By integrating complementary sensors such as LiDAR (laser scanning), high-resolution cameras, and inertial measurement units (IMUs), we obtain a far more detailed and comprehensive view of the environment. For instance, LiDAR maps terrain geometry accurately, cameras add visual detail such as color and texture, and IMUs track the vehicle’s motion and orientation, supporting precise navigation even in challenging conditions.
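To make the IMU’s role more concrete, here is a minimal sketch of a complementary filter, a standard textbook technique (not necessarily the one used in our systems). It blends gyroscope integration, which is smooth but drifts over time, with the tilt implied by the accelerometer’s gravity reading, which is noisy but drift-free. The function name, the single pitch axis, the sign convention, and the `alpha` weight are illustrative assumptions.

```python
import math

def complementary_filter(gyro_rates, accels, dt, alpha=0.98):
    """Estimate pitch (rad) from gyro rates and accelerometer samples.

    gyro_rates: pitch rate per sample in rad/s
    accels: (ax, ay, az) per sample in m/s^2, body frame
    dt: sample period in seconds
    alpha: weight on the gyro path (0.98 is an assumed, untuned value)
    """
    pitch = 0.0
    estimates = []
    for rate, (ax, ay, az) in zip(gyro_rates, accels):
        # Tilt implied by gravity; assumed convention: nose-up pitch theta
        # gives ax = -g*sin(theta). Noisy, but it does not drift.
        accel_pitch = math.atan2(-ax, math.sqrt(ay * ay + az * az))
        # Integrated gyro is smooth but drifts; blend the two estimates
        pitch = alpha * (pitch + rate * dt) + (1.0 - alpha) * accel_pitch
        estimates.append(pitch)
    return estimates
```

For a stationary IMU tilted by a fixed angle, the estimate converges to that angle. Real platforms usually estimate full 3D orientation with a Kalman-type filter, but the idea of letting each sensor cover the other’s weakness is the same.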

How Robots Work Together

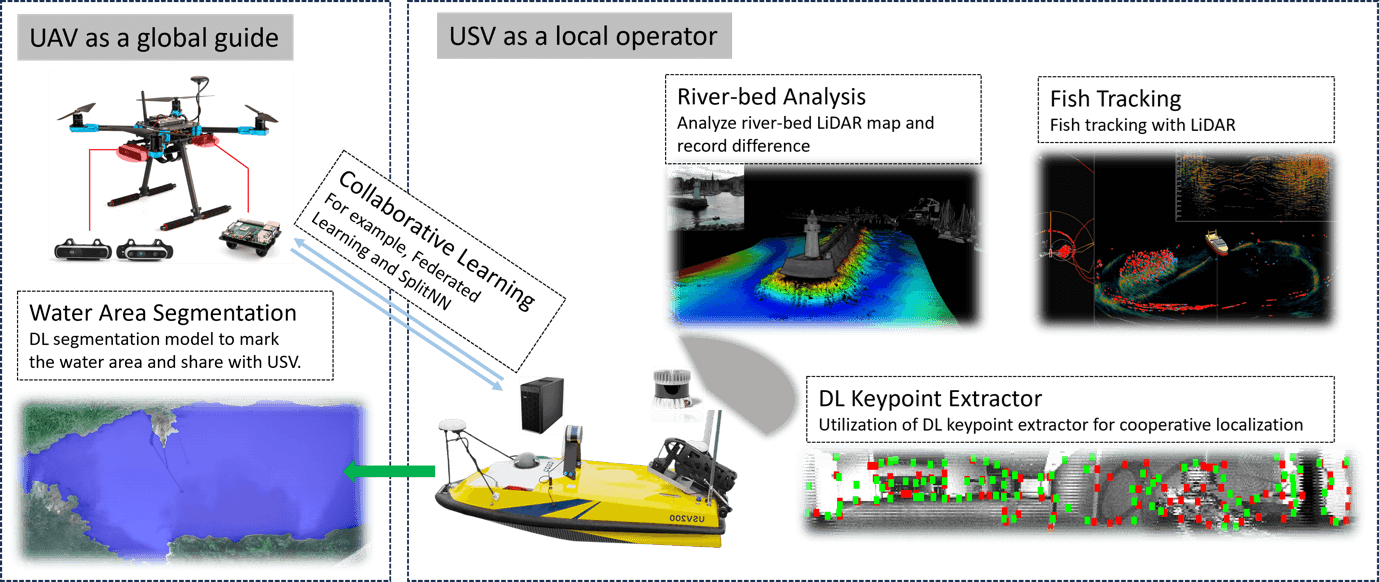

In the sketch shown above, we envision a collaborative robot system in which Unmanned Aerial Vehicles (UAVs), essentially drones, and Unmanned Surface Vehicles (USVs), essentially autonomous boats, cooperate. UAVs provide aerial coverage and can rapidly survey large areas, identifying critical points of interest from above. Meanwhile, USVs operate on the water surface, carrying out close-range inspections and deploying underwater sensors.
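A UAV surveying a large area typically flies a back-and-forth ("lawnmower" or boustrophedon) pattern so that adjacent sensor footprints tile the region. The sketch below generates such waypoints for a rectangular area. The rectangular area, the constant swath width, and the function name are simplifying assumptions for illustration, not our actual mission planner.

```python
import math

def lawnmower_waypoints(width_m, height_m, swath_m):
    """Back-and-forth waypoints covering a width_m x height_m rectangle.

    swath_m: assumed constant ground footprint width of one pass.
    Returns (x, y) waypoints in metres, origin at the lower-left corner.
    """
    if width_m <= 0 or height_m <= 0 or swath_m <= 0:
        raise ValueError("dimensions and swath must be positive")
    passes = math.ceil(width_m / swath_m)
    waypoints = []
    for i in range(passes):
        # Fly the centre line of each swath, but never outside the area
        x = min((i + 0.5) * swath_m, width_m)
        # Alternate direction so the vehicle turns at the edge
        # instead of flying back empty
        if i % 2 == 0:
            waypoints += [(x, 0.0), (x, height_m)]
        else:
            waypoints += [(x, height_m), (x, 0.0)]
    return waypoints
```

For a 100 m by 50 m area and a 40 m swath, this yields three passes at x = 20, 60, and 100 m. Real planners also account for overlap between swaths, wind, and irregular boundaries.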

Through collaborative frameworks, these robots share information in near real time. For example, drones identify areas that deserve closer investigation and direct the boats toward them. This teamwork improves situational awareness, supporting accurate data collection and quick responses to environmental changes.
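One simple way to turn drone observations into boat tasks is greedy assignment: repeatedly pair the closest remaining boat and point of interest. This is a minimal sketch with hypothetical names and planar coordinates in metres. It is not our framework’s coordination logic, and an optimal matching (for example, the Hungarian algorithm) minimizes total travel distance in cases where greedy pairing may not.

```python
import math

def assign_targets(boats, targets):
    """Greedily pair boats with points of interest flagged from the air.

    boats: dict boat_id -> (x, y) position in metres (hypothetical input)
    targets: dict target_id -> (x, y) point of interest
    Returns dict boat_id -> target_id; boats stay idle if targets run out.
    """
    # All boat-target pairs, shortest distance first
    pairs = sorted(
        (math.dist(bpos, tpos), bid, tid)
        for bid, bpos in boats.items()
        for tid, tpos in targets.items()
    )
    plan = {}
    taken = set()
    for _, bid, tid in pairs:
        # Accept a pair only if neither side is already assigned
        if bid not in plan and tid not in taken:
            plan[bid] = tid
            taken.add(tid)
    return plan
```

In the example below, each boat is sent to the point nearest to it and the distant third point stays unassigned until a boat becomes free:

`assign_targets({"usv1": (0, 0), "usv2": (100, 0)}, {"a": (90, 10), "b": (5, 5), "c": (500, 500)})` gives `{"usv1": "b", "usv2": "a"}`.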

Real-Life Applications and Benefits

Consider river monitoring. Traditionally, surveying a riverbed or tracking fish populations requires significant time and resources. With our robotic systems, UAVs quickly identify regions of interest, while USVs equipped with LiDAR and underwater cameras examine conditions beneath the surface, such as riverbed erosion or fish habitats.
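Erosion of the kind mentioned above is often quantified by differencing two gridded elevation surveys of the same area (a "DEM of difference"). The sketch below bins point-cloud samples into square cells, averages their heights, and flags cells whose height dropped by more than a threshold. The cell size, threshold, and function names are illustrative assumptions. In practice the threshold should reflect the combined uncertainty of the two surveys, and the point clouds must first be registered into a common frame.

```python
from collections import defaultdict

def grid_mean_heights(points, cell_m):
    """Average the z value of (x, y, z) points falling in each square cell."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for x, y, z in points:
        key = (int(x // cell_m), int(y // cell_m))  # integer cell index
        sums[key] += z
        counts[key] += 1
    return {key: sums[key] / counts[key] for key in sums}

def erosion_cells(before, after, cell_m=1.0, threshold_m=0.1):
    """Return {cell: height change} for cells that dropped by more than threshold_m.

    before/after: point clouds from two surveys, already in the same frame.
    cell_m and threshold_m are illustrative defaults, not calibrated values.
    """
    g0 = grid_mean_heights(before, cell_m)
    g1 = grid_mean_heights(after, cell_m)
    changes = {}
    for key in g0.keys() & g1.keys():  # only cells observed in both surveys
        dz = g1[key] - g0[key]
        if dz < -threshold_m:
            changes[key] = dz
    return changes
```

Cells seen in only one survey are skipped rather than treated as change, which avoids flagging gaps in coverage as erosion.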

Additionally, deploying these multi-robot systems in remote areas or during environmental emergencies, such as oil spills or natural disasters, can provide rapid and comprehensive data, helping authorities respond faster and make better-informed decisions.

Key Technical Components

1. Multi-Modal Sensor Fusion

Integrating LiDAR point clouds with camera images and IMU data builds a unified 3D model of the environment. Accurate calibration and time synchronization are critical: misaligned data can lead to false positives in change detection or object identification.
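A minimal sketch of the two steps named above, assuming a pinhole camera model, a fixed LiDAR-to-camera extrinsic calibration (rotation `R`, translation `t`), and camera intrinsics `fx`, `fy`, `cx`, `cy`. First, each LiDAR sweep is paired with the camera frame nearest in time, rejecting pairs farther apart than a tolerance; then LiDAR points are projected into that image. All names and the tolerance are hypothetical, and lens distortion and motion during the sweep are ignored.

```python
def nearest_frame(lidar_stamp, camera_stamps, max_offset_s):
    """Index of the camera frame closest in time, or None if none is within max_offset_s."""
    if not camera_stamps:
        return None
    best = min(range(len(camera_stamps)), key=lambda i: abs(camera_stamps[i] - lidar_stamp))
    # Reject the pair if the sensors were sampled too far apart
    return best if abs(camera_stamps[best] - lidar_stamp) <= max_offset_s else None

def project_point(p_lidar, R, t, fx, fy, cx, cy):
    """Project one LiDAR point to pixel coordinates (u, v) with a pinhole model.

    R: 3x3 rotation (list of rows) and t: 3-vector, LiDAR-to-camera extrinsics.
    Returns None if the point lies behind the camera.
    """
    # Transform into the camera frame: p_cam = R * p_lidar + t
    p_cam = [sum(R[r][c] * p_lidar[c] for c in range(3)) + t[r] for r in range(3)]
    xc, yc, zc = p_cam
    if zc <= 0:
        return None
    # Perspective division followed by the intrinsic scaling and offset
    return (fx * xc / zc + cx, fy * yc / zc + cy)
```

As a usage example, the rotation `R = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]` maps a common LiDAR axis convention (x forward, y left, z up) to a common camera convention (x right, y down, z forward); a real system takes `R` and `t` from calibration. With `fx = fy = 500` and `(cx, cy) = (320, 240)`, a point 10 m ahead and 1 m to the right lands at pixel (370, 240).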

2. Deep Learning for Perception

We use convolutional neural networks (CNNs) for detection and segmentation. For example, on UAVs, segmentation delineates shoreline boundaries; on USVs, it identifies underwater features such as vegetation and debris. These techniques turn raw sensor feeds into actionable information for environmental assessment.
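The network itself is beyond a short example, but the post-processing step that turns its output into a shoreline can be sketched. Assuming a segmentation model produces a per-pixel water probability for a UAV image (the input format, function name, and 0.5 threshold are hypothetical), the shoreline can be taken as the water pixels that border land:

```python
def shoreline_pixels(water_prob, threshold=0.5):
    """Return (row, col) of water pixels with at least one land 4-neighbour.

    water_prob: 2D list of per-pixel water probabilities from a
    segmentation network (hypothetical input format).
    threshold: assumed decision cut-off between water and land.
    """
    # Binarize the probability map: True = water, False = land
    water = [[p >= threshold for p in row] for row in water_prob]
    rows = len(water)
    cols = len(water[0]) if rows else 0
    boundary = []
    for r in range(rows):
        for c in range(cols):
            if not water[r][c]:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                # Image borders are not treated as land
                if 0 <= nr < rows and 0 <= nc < cols and not water[nr][nc]:
                    boundary.append((r, c))
                    break
    return boundary
```

Comparing the extracted boundary across flights is one way such segmentation output could feed into shoreline change assessment.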

Looking Ahead

By combining multi-sensor systems on multiple robots, environmental monitoring can become more adaptive, scalable, and secure. Future work will extend this approach to quadruped robots for shoreline inspections and explore satellite data integration for wider-area context. The potential impact spans freshwater conservation, marine ecology, and disaster response.

16.5.2025.